Commit f0dca8e (verified) · 0 parent(s)

Duplicate from allenai/OLMo-2-0325-32B-Instruct

Co-authored-by: Shengyi Costa Huang <[email protected]>

- .gitattributes +38 -0

- README.md +222 -0

- config.json +28 -0

- generation_config.json +7 -0

- merges.txt +0 -0

- model-00001-of-00014.safetensors +3 -0

- model-00002-of-00014.safetensors +3 -0

- model-00003-of-00014.safetensors +3 -0

- model-00004-of-00014.safetensors +3 -0

- model-00005-of-00014.safetensors +3 -0

- model-00006-of-00014.safetensors +3 -0

- model-00007-of-00014.safetensors +3 -0

- model-00008-of-00014.safetensors +3 -0

- model-00009-of-00014.safetensors +3 -0

- model-00010-of-00014.safetensors +3 -0

- model-00011-of-00014.safetensors +3 -0

- model-00012-of-00014.safetensors +3 -0

- model-00013-of-00014.safetensors +3 -0

- model-00014-of-00014.safetensors +3 -0

- model.safetensors.index.json +714 -0

- olmo-32b-instruct-eval-curve.png +0 -0

- olmo-32b-instruct-full-eval-curve.png +3 -0

- olmo-32b-instruct-learning-curve-time.png +3 -0

- olmo-32b-instruct-learning-curve.png +3 -0

- special_tokens_map.json +30 -0

- tokenizer.json +0 -0

- tokenizer_config.json +190 -0

- vocab.json +0 -0

.gitattributes

ADDED

|

@@ -0,0 +1,38 @@

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

olmo-32b-instruct-full-eval-curve.png filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

olmo-32b-instruct-learning-curve-time.png filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

olmo-32b-instruct-learning-curve.png filter=lfs diff=lfs merge=lfs -text
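The patterns above decide which files in this commit are stored as Git LFS pointers (these show up as `+3 -0` in the file list) rather than as regular blobs. The sketch below approximates that matching with Python's shell-style globbing; it uses only a subset of the patterns, and real gitattributes matching has extra rules (for example around `/` and `**`), so `is_lfs_tracked` is a hypothetical helper for illustration, not Git's own logic.

```python
from fnmatch import fnmatchcase

# A subset of the patterns listed in the .gitattributes diff above.
LFS_PATTERNS = [
    "*.bin",
    "*.safetensors",
    "olmo-32b-instruct-full-eval-curve.png",
    "olmo-32b-instruct-learning-curve-time.png",
    "olmo-32b-instruct-learning-curve.png",
]


def is_lfs_tracked(filename: str) -> bool:
    """Approximate gitattributes matching with case-sensitive shell globbing."""
    return any(fnmatchcase(filename, pattern) for pattern in LFS_PATTERNS)


for name in (
    "model-00001-of-00014.safetensors",
    "config.json",
    "olmo-32b-instruct-eval-curve.png",
):
    print(name, is_lfs_tracked(name))
```

Under these patterns the weight shards are LFS-tracked, while `config.json` and `olmo-32b-instruct-eval-curve.png` (which has no entry of its own) are not.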

|

README.md

ADDED

|

@@ -0,0 +1,222 @@

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

datasets:

|

| 6 |

+

- allenai/RLVR-GSM-MATH-IF-Mixed-Constraints

|

| 7 |

+

base_model:

|

| 8 |

+

- allenai/OLMo-2-0325-32B-DPO

|

| 9 |

+

pipeline_tag: text-generation

|

| 10 |

+

library_name: transformers

|

| 11 |

+

---

|

| 12 |

+

|

| 13 |

+

<img alt="OLMo Logo" src="https://huggingface.co/datasets/allenai/blog-images/resolve/main/olmo2/olmo.png" width="242px">

|

| 14 |

+

|

| 15 |

+

OLMo 2 32B Instruct March 2025 is a post-trained variant of the [OLMo-2 32B March 2025](https://huggingface.co/allenai/OLMo-2-0325-32B/) model, which has undergone supervised finetuning on an OLMo-specific variant of the [Tülu 3 dataset](https://huggingface.co/datasets/allenai/tulu-3-sft-olmo-2-mixture), further DPO training on [this dataset](https://huggingface.co/datasets/allenai/olmo-2-0325-32b-preference-mix), and final RLVR training on [this dataset](https://huggingface.co/datasets/allenai/RLVR-GSM-MATH-IF-Mixed-Constraints).

|

| 16 |

+

Tülu 3 is designed for state-of-the-art performance on a diversity of tasks in addition to chat, such as MATH, GSM8K, and IFEval.

|

| 17 |

+

Check out the [OLMo 2 paper](https://arxiv.org/abs/2501.00656) or [Tülu 3 paper](https://arxiv.org/abs/2411.15124) for more details!

|

| 18 |

+

|

| 19 |

+

OLMo is a series of **O**pen **L**anguage **Mo**dels designed to enable the science of language models.

|

| 20 |

+

These models are trained on the Dolma dataset. We are releasing all code, checkpoints, logs, and associated training details.

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

## Model description

|

| 24 |

+

|

| 25 |

+

- **Model type:** A model trained on a mix of publicly available, synthetic and human-created datasets.

|

| 26 |

+

- **Language(s) (NLP):** Primarily English

|

| 27 |

+

- **License:** Apache 2.0

|

| 28 |

+

- **Finetuned from model:** allenai/OLMo-2-0325-32B-DPO

|

| 29 |

+

|

| 30 |

+

### Model Sources

|

| 31 |

+

|

| 32 |

+

- **Project Page:** https://allenai.org/olmo

|

| 33 |

+

- **Repositories:**

|

| 34 |

+

- Core repo (training, inference, fine-tuning etc.): https://github.com/allenai/OLMo-core

|

| 35 |

+

- Evaluation code: https://github.com/allenai/olmes

|

| 36 |

+

- Further fine-tuning code: https://github.com/allenai/open-instruct

|

| 37 |

+

- **Paper:** https://arxiv.org/abs/2501.00656

|

| 38 |

+

- **Demo:** https://playground.allenai.org/

|

| 39 |

+

|

| 40 |

+

## Installation

|

| 41 |

+

|

| 42 |

+

OLMo 2 will be supported in the next version of Transformers, and you need to install it from the main branch using:

|

| 43 |

+

```bash

|

| 44 |

+

pip install --upgrade git+https://github.com/huggingface/transformers.git

|

| 45 |

+

```

|

| 46 |

+

|

| 47 |

+

## Using the model

|

| 48 |

+

|

| 49 |

+

### Loading with HuggingFace

|

| 50 |

+

|

| 51 |

+

To load the model with HuggingFace, use the following snippet:

|

| 52 |

+

```python

|

| 53 |

+

from transformers import AutoModelForCausalLM

|

| 54 |

+

|

| 55 |

+

olmo_model = AutoModelForCausalLM.from_pretrained("allenai/OLMo-2-0325-32B-Instruct")

|

| 56 |

+

```

|

| 57 |

+

|

| 58 |

+

### Chat template

|

| 59 |

+

|

| 60 |

+

*NOTE: This differs from previous OLMo 2 and Tülu 3 models because of a minor change in configuration: the template does NOT start with the bos token. Our other models have <|endoftext|> at the beginning of the chat template.*

|

| 61 |

+

|

| 62 |

+

The chat template for our models is formatted as:

|

| 63 |

+

```

|

| 64 |

+

<|user|>\nHow are you doing?\n<|assistant|>\nI'm just a computer program, so I don't have feelings, but I'm functioning as expected. How can I assist you today?<|endoftext|>

|

| 65 |

+

```

|

| 66 |

+

Or with newlines expanded:

|

| 67 |

+

```

|

| 68 |

+

<|user|>

|

| 69 |

+

How are you doing?

|

| 70 |

+

<|assistant|>

|

| 71 |

+

I'm just a computer program, so I don't have feelings, but I'm functioning as expected. How can I assist you today?<|endoftext|>

|

| 72 |

+

```

|

| 73 |

+

The template is also embedded in the tokenizer, so `tokenizer.apply_chat_template` applies it for you.
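To make the format concrete, here is a minimal sketch that rebuilds the string shown above from a list of messages. It is an illustration only: `format_chat` is a hypothetical helper, the newline placed after `<|endoftext|>` between turns is an assumption, and the template shipped with the tokenizer remains the authoritative version.

```python
EOS_TOKEN = "<|endoftext|>"


def format_chat(messages, add_generation_prompt=False):
    """Render user/assistant turns in the format shown above (no leading bos token)."""
    parts = []
    for index, message in enumerate(messages):
        role, content = message["role"], message["content"]
        is_last = index == len(messages) - 1
        if role == "user":
            parts.append(f"<|user|>\n{content}\n")
        elif role == "assistant":
            # Assumption: a newline separates a finished assistant turn from the next turn.
            parts.append(f"<|assistant|>\n{content}{EOS_TOKEN}" + ("" if is_last else "\n"))
        else:
            raise ValueError(f"role not covered by this sketch: {role!r}")
    if add_generation_prompt:
        # Leave the assistant turn open so the model writes the reply.
        parts.append("<|assistant|>\n")
    return "".join(parts)


prompt = format_chat(
    [{"role": "user", "content": "How are you doing?"}],
    add_generation_prompt=True,
)
print(repr(prompt))
```

With `add_generation_prompt=True` the string ends in an open `<|assistant|>` turn, which is the usual shape of a prompt passed to generation.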

|

| 74 |

+

|

| 75 |

+

### System prompt

|

| 76 |

+

|

| 77 |

+

In Ai2 demos, we use this system prompt by default:

|

| 78 |

+

```

|

| 79 |

+

You are OLMo 2, a helpful and harmless AI Assistant built by the Allen Institute for AI.

|

| 80 |

+

```

|

| 81 |

+

The model has not been trained with a specific system prompt in mind.

|

| 82 |

+

|

| 83 |

+

### Intermediate Checkpoints

|

| 84 |

+

|

| 85 |

+

To facilitate research on RL finetuning, we have released our intermediate checkpoints during the model's RLVR training.

|

| 86 |

+

The model weights are saved every 20 training steps and are accessible as revisions of the HuggingFace repository.

|

| 87 |

+

For example, you can load with:

|

| 88 |

+

```python

|

| 89 |

+

from transformers import AutoModelForCausalLM

olmo_model = AutoModelForCausalLM.from_pretrained("allenai/OLMo-2-0325-32B-Instruct", revision="step_200")

|

| 90 |

+

```
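As a rough guide to which revisions to expect, the sketch below enumerates names of the form `step_<n>` from the 20-step save interval and the final step `320` mentioned in this card. The naming pattern is inferred from the single `step_200` example, so treat the list as an assumption and check the repository's revisions before relying on any particular name.

```python
SAVE_FREQ = 20    # from this card: weights are saved every 20 training steps
FINAL_STEP = 320  # from this card: step 320 was taken as the final checkpoint


def checkpoint_revisions(save_freq=SAVE_FREQ, final_step=FINAL_STEP):
    """List candidate revision names, assuming the `step_<n>` pattern holds throughout."""
    return [f"step_{step}" for step in range(save_freq, final_step + 1, save_freq)]


revisions = checkpoint_revisions()
print(len(revisions), revisions[0], revisions[-1])
```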

|

| 91 |

+

|

| 92 |

+

### Bias, Risks, and Limitations

|

| 93 |

+

|

| 94 |

+

The OLMo-2 models have limited safety training, but are not deployed automatically with in-the-loop filtering of responses like ChatGPT, so the model can produce problematic outputs (especially when prompted to do so).

|

| 95 |

+

See the Falcon 180B model card for an example of this.

|

| 96 |

+

|

| 97 |

+

|

| 98 |

+

## Performance

|

| 99 |

+

|

| 100 |

+

| Model | Average | AlpacaEval 2 LC | BBH | DROP | GSM8K | IFEval | MATH | MMLU | Safety | PopQA | TruthfulQA |

|

| 101 |

+

|-------|---------|------|-----|------|-------|--------|------|------|--------|-------|---------|

|

| 102 |

+

| **Closed API models** | | | | | | | | | | | |

|

| 103 |

+

| GPT-3.5 Turbo 0125 | 59.6 | 38.7 | 66.6 | 70.2 | 74.3 | 66.9 | 41.2 | 70.2 | 69.1 | 45.0 | 62.9 |

|

| 104 |

+

| GPT 4o Mini 2024-07-18 | 65.7 | 49.7 | 65.9 | 36.3 | 83.0 | 83.5 | 67.9 | 82.2 | 84.9 | 39.0 | 64.8 |

|

| 105 |

+

| **Open weights models** | | | | | | | | | | | |

|

| 106 |

+

| Mistral-Nemo-Instruct-2407 | 50.9 | 45.8 | 54.6 | 23.6 | 81.4 | 64.5 | 31.9 | 70.0 | 52.7 | 26.9 | 57.7 |

|

| 107 |

+

| Ministral-8B-Instruct | 52.1 | 31.4 | 56.2 | 56.2 | 80.0 | 56.4 | 40.0 | 68.5 | 56.2 | 20.2 | 55.5 |

|

| 108 |

+

| Gemma-2-27b-it | 61.3 | 49.0 | 72.7 | 67.5 | 80.7 | 63.2 | 35.1 | 70.7 | 75.9 | 33.9 | 64.6 |

|

| 109 |

+

| Qwen2.5-32B | 66.5 | 39.1 | 82.3 | 48.3 | 87.5 | 82.4 | 77.9 | 84.7 | 82.4 | 26.1 | 70.6 |

|

| 110 |

+

| Mistral-Small-24B | 67.6 | 43.2 | 80.1 | 78.5 | 87.2 | 77.3 | 65.9 | 83.7 | 66.5 | 24.4 | 68.1 |

|

| 111 |

+

| Llama-3.1-70B | 70.0 | 32.9 | 83.0 | 77.0 | 94.5 | 88.0 | 56.2 | 85.2 | 76.4 | 46.5 | 66.8 |

|

| 112 |

+

| Llama-3.3-70B | 73.0 | 36.5 | 85.8 | 78.0 | 93.6 | 90.8 | 71.8 | 85.9 | 70.4 | 48.2 | 66.1 |

|

| 113 |

+

| Gemma-3-27b-it | - | 63.4 | 83.7 | 69.2 | 91.1 | - | - | 81.8 | - | 30.9 | - |

|

| 114 |

+

| **Fully open models** | | | | | | | | | | | |

|

| 115 |

+

| OLMo-2-7B-1124-Instruct | 55.7 | 31.0 | 48.5 | 58.9 | 85.2 | 75.6 | 31.3 | 63.9 | 81.2 | 24.6 | 56.3 |

|

| 116 |

+

| OLMo-2-13B-1124-Instruct | 61.4 | 37.5 | 58.4 | 72.1 | 87.4 | 80.4 | 39.7 | 68.6 | 77.5 | 28.8 | 63.9 |

|

| 117 |

+

| **OLMo-2-32B-0325-SFT** | 61.7 | 16.9 | 69.7 | 77.2 | 78.4 | 72.4 | 35.9 | 76.1 | 93.8 | 35.4 | 61.3 |

|

| 118 |

+

| **OLMo-2-32B-0325-DPO** | 68.8 | 44.1 | 70.2 | 77.5 | 85.7 | 83.8 | 46.8 | 78.0 | 91.9 | 36.4 | 73.5 |

|

| 119 |

+

| **OLMo-2-32B-0325-Instruct** | 68.8 | 42.8 | 70.6 | 78.0 | 87.6 | 85.6 | 49.7 | 77.3 | 85.9 | 37.5 | 73.2 |
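The Average column appears to be the unweighted mean of the ten benchmark columns. The sketch below checks that reading against the three OLMo-2-32B-0325 rows, with the scores copied from the table; the averaging rule itself is an inference from the numbers, not something the card states.

```python
# Ten benchmark scores per model, in table order (AlpacaEval 2 LC ... last column).
scores = {
    "OLMo-2-32B-0325-SFT": [16.9, 69.7, 77.2, 78.4, 72.4, 35.9, 76.1, 93.8, 35.4, 61.3],
    "OLMo-2-32B-0325-DPO": [44.1, 70.2, 77.5, 85.7, 83.8, 46.8, 78.0, 91.9, 36.4, 73.5],
    "OLMo-2-32B-0325-Instruct": [42.8, 70.6, 78.0, 87.6, 85.6, 49.7, 77.3, 85.9, 37.5, 73.2],
}


def unweighted_average(values):
    """Mean of the benchmark scores, rounded to one decimal like the table."""
    return round(sum(values) / len(values), 1)


averages = {model: unweighted_average(values) for model, values in scores.items()}
for model, average in averages.items():
    print(model, average)
```

The computed values agree with the reported averages of 61.7, 68.8, and 68.8.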

|

| 120 |

+

|

| 121 |

+

|

| 122 |

+

|

| 123 |

+

|

| 124 |

+

## Learning curves

|

| 125 |

+

|

| 126 |

+

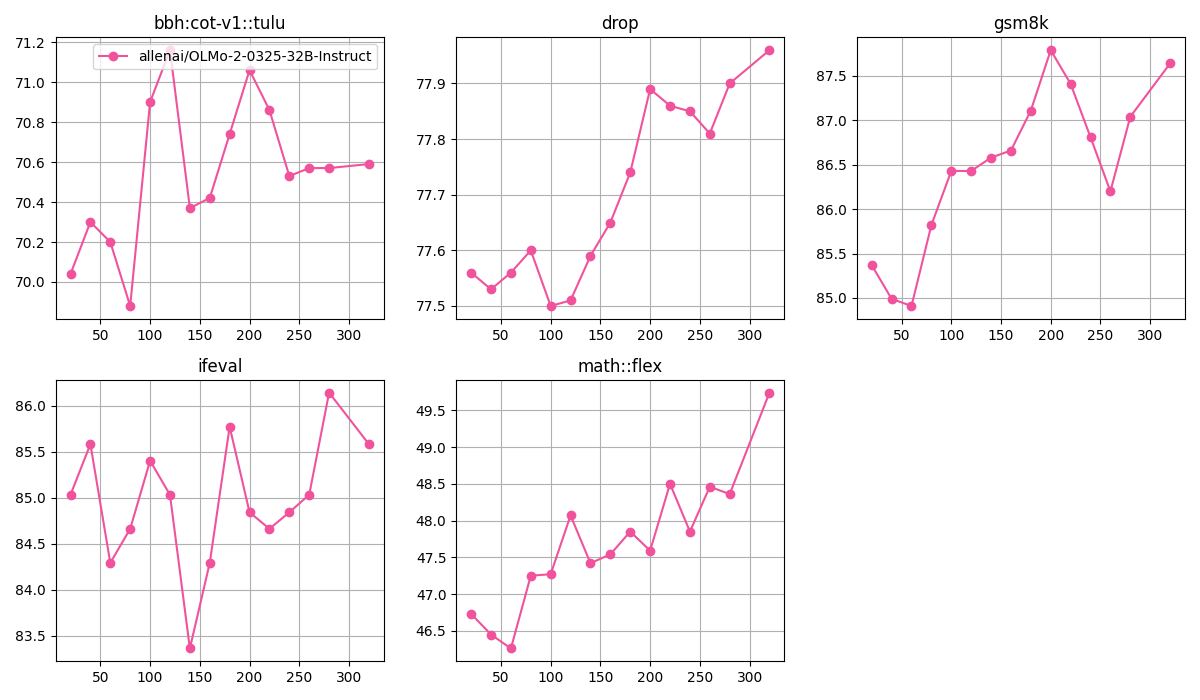

Below are the training curves for `allenai/OLMo-2-0325-32B-Instruct`. The model was trained using five 8xH100 nodes.

|

| 127 |

+

|

| 128 |

+

|

| 129 |

+

|

| 130 |

+

|

| 131 |

+

|

| 132 |

+

Below are the core eval scores over steps for `allenai/OLMo-2-0325-32B-Instruct` (note we took step `320` as the final checkpoint, corresponding to episode `573,440`):

|

| 133 |

+

|

| 134 |

+

|

| 135 |

+

|

| 136 |

+

Below are the other eval scores over steps for `allenai/OLMo-2-0325-32B-Instruct`:

|

| 137 |

+

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

|

| 141 |

+

## Reproduction command

|

| 142 |

+

|

| 143 |

+

The command below is copied directly from the tracked training job:

|

| 144 |

+

|

| 145 |

+

```bash

|

| 146 |

+

# clone and check out commit

|

| 147 |

+

git clone https://github.com/allenai/open-instruct.git

|

| 148 |

+

# this should be the correct commit, the main thing is to have the vllm monkey patch for

|

| 149 |

+

# 32b olmo https://github.com/allenai/open-instruct/blob/894ffa236319bc6c26c346240a7e4ee04ba0bd31/open_instruct/vllm_utils2.py#L37-L59

|

| 150 |

+

git checkout a51dc98525eec01de6e8a24c071f42dce407d738

|

| 151 |

+

uv sync

|

| 152 |

+

uv sync --extra compile

|

| 153 |

+

|

| 154 |

+

# note that you may need 5 8xH100 nodes for the training.

|

| 155 |

+

# so please setup ray properly, e.g., https://github.com/allenai/open-instruct/blob/main/docs/tulu3.md#llama-31-tulu-3-70b-reproduction

|

| 156 |

+

python open_instruct/grpo_vllm_thread_ray_gtrl.py \

|

| 157 |

+

--exp_name 0310_olmo2_32b_grpo_12818 \

|

| 158 |

+

--beta 0.01 \

|

| 159 |

+

--local_mini_batch_size 32 \

|

| 160 |

+

--number_samples_per_prompt 16 \

|

| 161 |

+

--output_dir output \

|

| 162 |

+

--local_rollout_batch_size 4 \

|

| 163 |

+

--kl_estimator kl3 \

|

| 164 |

+

--learning_rate 5e-7 \

|

| 165 |

+

--dataset_mixer_list allenai/RLVR-GSM-MATH-IF-Mixed-Constraints 1.0 \

|

| 166 |

+

--dataset_mixer_list_splits train \

|

| 167 |

+

--dataset_mixer_eval_list allenai/RLVR-GSM-MATH-IF-Mixed-Constraints 16 \

|

| 168 |

+

--dataset_mixer_eval_list_splits train \

|

| 169 |

+

--max_token_length 2048 \

|

| 170 |

+

--max_prompt_token_length 2048 \

|

| 171 |

+

--response_length 2048 \

|

| 172 |

+

--model_name_or_path allenai/OLMo-2-0325-32B-DPO \

|

| 173 |

+

--non_stop_penalty \

|

| 174 |

+

--stop_token eos \

|

| 175 |

+

--temperature 1.0 \

|

| 176 |

+

--ground_truths_key ground_truth \

|

| 177 |

+

--chat_template_name tulu \

|

| 178 |

+

--sft_messages_key messages \

|

| 179 |

+

--eval_max_length 4096 \

|

| 180 |

+

--total_episodes 10000000 \

|

| 181 |

+

--penalty_reward_value 0.0 \

|

| 182 |

+

--deepspeed_stage 3 \

|

| 183 |

+

--no_gather_whole_model \

|

| 184 |

+

--per_device_train_batch_size 2 \

|

| 185 |

+

--local_rollout_forward_batch_size 2 \

|

| 186 |

+

--actor_num_gpus_per_node 8 8 8 4 \

|

| 187 |

+

--num_epochs 1 \

|

| 188 |

+

--vllm_tensor_parallel_size 1 \

|

| 189 |

+

--vllm_num_engines 12 \

|

| 190 |

+

--lr_scheduler_type constant \

|

| 191 |

+

--apply_verifiable_reward true \

|

| 192 |

+

--seed 1 \

|

| 193 |

+

--num_evals 30 \

|

| 194 |

+

--save_freq 20 \

|

| 195 |

+

--reward_model_multiplier 0.0 \

|

| 196 |

+

--no_try_launch_beaker_eval_jobs \

|

| 197 |

+

--try_launch_beaker_eval_jobs_on_weka \

|

| 198 |

+

--gradient_checkpointing \

|

| 199 |

+

--with_tracking

|

| 200 |

+

```
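The flags above can be tied back to two numbers quoted in this card: five 8xH100 nodes, and episode `573,440` at step `320`. The sketch below works through that arithmetic. The formula for episodes per step (rollout batch per GPU × samples per prompt × actor GPUs) is an assumption about how the training script counts episodes; it is used here because it reproduces the stated figure.

```python
# Values copied from the reproduction command above.
local_rollout_batch_size = 4
number_samples_per_prompt = 16
actor_num_gpus_per_node = [8, 8, 8, 4]
vllm_num_engines = 12
vllm_tensor_parallel_size = 1
final_step = 320  # from this card: step 320 is the final checkpoint

# GPUs that run the learner processes.
actor_gpus = sum(actor_num_gpus_per_node)

# Assumed accounting: every actor GPU handles its own rollout batch,
# and every prompt is sampled number_samples_per_prompt times.
episodes_per_step = local_rollout_batch_size * number_samples_per_prompt * actor_gpus
episodes_at_final_step = episodes_per_step * final_step

# Each vLLM engine occupies vllm_tensor_parallel_size GPUs for generation.
total_gpus = actor_gpus + vllm_num_engines * vllm_tensor_parallel_size
nodes = total_gpus // 8

print(actor_gpus, episodes_per_step, episodes_at_final_step, total_gpus, nodes)
```

Under these assumptions, 28 actor GPUs plus 12 inference GPUs fill exactly five 8-GPU nodes, and 1,792 episodes per step give 573,440 episodes at step 320.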

|

| 201 |

+

|

| 202 |

+

|

| 203 |

+

## License and use

|

| 204 |

+

|

| 205 |

+

OLMo 2 is licensed under the Apache 2.0 license.

|

| 206 |

+

OLMo 2 is intended for research and educational use.

|

| 207 |

+

For more information, please see our [Responsible Use Guidelines](https://allenai.org/responsible-use).

|

| 208 |

+

This model has been fine-tuned using a dataset mix with outputs generated from third-party models and is subject to additional terms: [Gemma Terms of Use](https://ai.google.dev/gemma/terms).

|

| 209 |

+

|

| 210 |

+

## Citation

|

| 211 |

+

|

| 212 |

+

```bibtex

|

| 213 |

+

@article{olmo20242olmo2furious,

|

| 214 |

+

title={2 OLMo 2 Furious},

|

| 215 |

+

author={Team OLMo and Pete Walsh and Luca Soldaini and Dirk Groeneveld and Kyle Lo and Shane Arora and Akshita Bhagia and Yuling Gu and Shengyi Huang and Matt Jordan and Nathan Lambert and Dustin Schwenk and Oyvind Tafjord and Taira Anderson and David Atkinson and Faeze Brahman and Christopher Clark and Pradeep Dasigi and Nouha Dziri and Michal Guerquin and Hamish Ivison and Pang Wei Koh and Jiacheng Liu and Saumya Malik and William Merrill and Lester James V. Miranda and Jacob Morrison and Tyler Murray and Crystal Nam and Valentina Pyatkin and Aman Rangapur and Michael Schmitz and Sam Skjonsberg and David Wadden and Christopher Wilhelm and Michael Wilson and Luke Zettlemoyer and Ali Farhadi and Noah A. Smith and Hannaneh Hajishirzi},

|

| 216 |

+

year={2024},

|

| 217 |

+

eprint={2501.00656},

|

| 218 |

+

archivePrefix={arXiv},

|

| 219 |

+

primaryClass={cs.CL},

|

| 220 |

+

url={https://arxiv.org/abs/2501.00656},

|

| 221 |

+

}

|

| 222 |

+

```

|

config.json

ADDED

|

@@ -0,0 +1,28 @@

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "allenai/open_instruct_dev",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"Olmo2ForCausalLM"

|

| 5 |

+

],

|

| 6 |

+

"attention_bias": false,

|

| 7 |

+

"attention_dropout": 0.0,

|

| 8 |

+

"bos_token_id": 100257,

|

| 9 |

+

"eos_token_id": 100257,

|

| 10 |

+

"hidden_act": "silu",

|

| 11 |

+

"hidden_size": 5120,

|

| 12 |

+

"initializer_range": 0.02,

|

| 13 |

+

"intermediate_size": 27648,

|

| 14 |

+

"max_position_embeddings": 4096,

|

| 15 |

+

"model_type": "olmo2",

|

| 16 |

+

"num_attention_heads": 40,

|

| 17 |

+

"num_hidden_layers": 64,

|

| 18 |

+

"num_key_value_heads": 8,

|

| 19 |

+

"pad_token_id": 100277,

|

| 20 |

+

"rms_norm_eps": 1e-06,

|

| 21 |

+

"rope_scaling": null,

|

| 22 |

+

"rope_theta": 500000,

|

| 23 |

+

"tie_word_embeddings": false,

|

| 24 |

+

"torch_dtype": "bfloat16",

|

| 25 |

+

"transformers_version": "4.49.0",

|

| 26 |

+

"use_cache": false,

|

| 27 |

+

"vocab_size": 100352

|

| 28 |

+

}
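The architecture fields above are enough to estimate the parameter count and the bfloat16 checkpoint size. The sketch below does this under a few assumptions that are not spelled out in the config: no bias terms, a `q_norm` weight of size `hidden_size`, a `k_norm` weight of size `num_key_value_heads * head_dim`, and a single final norm weight. `count_parameters` is a hypothetical helper written for this illustration.

```python
# Fields copied from config.json above.
config = {
    "hidden_size": 5120,
    "intermediate_size": 27648,
    "num_attention_heads": 40,
    "num_hidden_layers": 64,
    "num_key_value_heads": 8,
    "vocab_size": 100352,
}


def count_parameters(cfg):
    """Estimate the parameter count of the decoder under the stated assumptions."""
    hidden = cfg["hidden_size"]
    head_dim = hidden // cfg["num_attention_heads"]   # 128
    kv_dim = cfg["num_key_value_heads"] * head_dim    # 1024 (grouped-query attention)
    attention = 2 * hidden * hidden + 2 * hidden * kv_dim  # q_proj, o_proj + k_proj, v_proj
    mlp = 3 * hidden * cfg["intermediate_size"]            # gate_proj, up_proj, down_proj
    norms = 2 * hidden + hidden + kv_dim  # two layer norms, q_norm, k_norm (assumed shapes)
    per_layer = attention + mlp + norms
    embeddings = 2 * cfg["vocab_size"] * hidden  # embed_tokens + lm_head (untied)
    final_norm = hidden                          # assumed final norm weight
    return cfg["num_hidden_layers"] * per_layer + embeddings + final_norm


total_params = count_parameters(config)
total_bytes = 2 * total_params  # bfloat16 stores two bytes per parameter
print(total_params, total_bytes)
```

The byte count that results matches the `total_size` of 64468559872 recorded in `model.safetensors.index.json` later in this commit, which suggests the assumed shapes are right.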

|

generation_config.json

ADDED

|

@@ -0,0 +1,7 @@

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 100257,

|

| 4 |

+

"eos_token_id": 100257,

|

| 5 |

+

"pad_token_id": 100277,

|

| 6 |

+

"transformers_version": "4.49.0"

|

| 7 |

+

}

|

merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

model-00001-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d539da18a12ce2b7985e4b255e707956ee67e67105e3f4f215071f2ec612c1e1

|

| 3 |

+

size 4991358312

|

model-00002-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5465b5c0e0ec170bf457c7af852b4887f9a13d603f13a52e6f2a3737b7ae8aa1

|

| 3 |

+

size 4938975944

|

model-00003-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0b6e37dc85b5a0d0360dc461ed172d3c3ed907cb90aba821636ce5c0bb429b73

|

| 3 |

+

size 4876048688

|

model-00004-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f52a93bd70044cabd8729ad9583ef6d96c6528e8765e52abad4e2d1c9a608e9c

|

| 3 |

+

size 4876048704

|

model-00005-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:997245db5a143d21abf2806ad9be4ac8c2c3c9dede5f07315bd05d522dd50dcb

|

| 3 |

+

size 4876048704

|

model-00006-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:98c2042bc4f9310afd36bf6152501fdf886d03764b60cf4eafc7f69d19c8b6e9

|

| 3 |

+

size 4876048704

|

model-00007-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:423d9b94bdb2c424ea5670f87a3313626b09d84be0a237eddd9117ad6e21c1f0

|

| 3 |

+

size 4876048704

|

model-00008-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a3cd577278dfceb80a8aa972594a75d406e17283c5240a12ca9effb0b8d70f8a

|

| 3 |

+

size 4876048704

|

model-00009-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:3a8c9d3d0fe6f1087cf87573861a6d055ff3ede0f6816b97f77cd83975024eeb

|

| 3 |

+

size 4876048704

|

model-00010-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:45eb53627f4a8ba1edf53239c5d8ac57c77744f8500ac12611bd6818a8de132a

|

| 3 |

+

size 4876048704

|

model-00011-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5a1bd367c7ca31ded74cfc45c6315ac309088016d75b89dca950f9040affb25b

|

| 3 |

+

size 4876048704

|

model-00012-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0f5bea3b50b29bf0e9ccfc137415ae86df332569922c8d9ba17275adf9d7645e

|

| 3 |

+

size 4876048704

|

model-00013-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2176fb4ceff37537dce4c7cc3b03e729576d91a58b60f68b353270b706345c7f

|

| 3 |

+

size 4750216928

|

model-00014-of-00014.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5d809a3c77c3757307669557ca455d28a29c9b38864fbe0b236f4d9f7b2cbe86

|

| 3 |

+

size 1027604608
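Each pointer above records the byte size of one shard, so the total download can be checked against the `total_size` field of `model.safetensors.index.json`. The sketch below sums the fourteen sizes from this diff; reading the small surplus as per-file header overhead is an assumption about the safetensors layout, not something the commit states.

```python
# Shard sizes in bytes, copied from the LFS pointers above (shards 1 to 14).
shard_sizes = [
    4991358312,
    4938975944,
    4876048688,
    *[4876048704] * 9,  # shards 4 through 12 share the same size
    4750216928,
    1027604608,
]

# Tensor bytes reported under metadata.total_size in model.safetensors.index.json.
index_total_size = 64468559872

download_bytes = sum(shard_sizes)
overhead_bytes = download_bytes - index_total_size

print(len(shard_sizes), download_bytes, overhead_bytes)
print(f"{download_bytes / 1e9:.1f} GB on disk")
```

The files total about 64.5 GB, and the difference from `total_size` is under 100 KB across all shards.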

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,714 @@

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 64468559872

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"lm_head.weight": "model-00014-of-00014.safetensors",

|

| 7 |

+

"model.embed_tokens.weight": "model-00001-of-00014.safetensors",

|

| 8 |

+

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00014.safetensors",

|

| 9 |

+

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00014.safetensors",

|

| 10 |

+

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00014.safetensors",

|

| 11 |

+

"model.layers.0.post_attention_layernorm.weight": "model-00001-of-00014.safetensors",

|

| 12 |

+

"model.layers.0.post_feedforward_layernorm.weight": "model-00001-of-00014.safetensors",

|

| 13 |

+

"model.layers.0.self_attn.k_norm.weight": "model-00001-of-00014.safetensors",

|

| 14 |

+

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00014.safetensors",

|

| 15 |

+

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00014.safetensors",

|

| 16 |

+

"model.layers.0.self_attn.q_norm.weight": "model-00001-of-00014.safetensors",

|

| 17 |

+

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00014.safetensors",

|

| 18 |

+

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00014.safetensors",

|

| 19 |

+

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00014.safetensors",

|

| 20 |

+

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00014.safetensors",

|

| 21 |

+

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00014.safetensors",

|

| 22 |

+

"model.layers.1.post_attention_layernorm.weight": "model-00001-of-00014.safetensors",

|

| 23 |

+

"model.layers.1.post_feedforward_layernorm.weight": "model-00001-of-00014.safetensors",

|

| 24 |

+

"model.layers.1.self_attn.k_norm.weight": "model-00001-of-00014.safetensors",

|

| 25 |

+

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00014.safetensors",

|

| 26 |

+

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00014.safetensors",

|

| 27 |

+

"model.layers.1.self_attn.q_norm.weight": "model-00001-of-00014.safetensors",

|

| 28 |

+

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00014.safetensors",

|

| 29 |

+

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00014.safetensors",

|

| 30 |

+

"model.layers.10.mlp.down_proj.weight": "model-00003-of-00014.safetensors",

|

| 31 |

+

"model.layers.10.mlp.gate_proj.weight": "model-00003-of-00014.safetensors",

|

| 32 |

+

"model.layers.10.mlp.up_proj.weight": "model-00003-of-00014.safetensors",

|

| 33 |

+

"model.layers.10.post_attention_layernorm.weight": "model-00003-of-00014.safetensors",

|

| 34 |

+

"model.layers.10.post_feedforward_layernorm.weight": "model-00003-of-00014.safetensors",

|

| 35 |

+

"model.layers.10.self_attn.k_norm.weight": "model-00003-of-00014.safetensors",

|

| 36 |

+

"model.layers.10.self_attn.k_proj.weight": "model-00003-of-00014.safetensors",

|

| 37 |

+

"model.layers.10.self_attn.o_proj.weight": "model-00003-of-00014.safetensors",

|

| 38 |

+

"model.layers.10.self_attn.q_norm.weight": "model-00003-of-00014.safetensors",

|

| 39 |

+

"model.layers.10.self_attn.q_proj.weight": "model-00003-of-00014.safetensors",

|

| 40 |

+

"model.layers.10.self_attn.v_proj.weight": "model-00003-of-00014.safetensors",

|

| 41 |

+

"model.layers.11.mlp.down_proj.weight": "model-00003-of-00014.safetensors",

|

| 42 |

+

"model.layers.11.mlp.gate_proj.weight": "model-00003-of-00014.safetensors",

|

| 43 |

+

"model.layers.11.mlp.up_proj.weight": "model-00003-of-00014.safetensors",

|

| 44 |

+

"model.layers.11.post_attention_layernorm.weight": "model-00003-of-00014.safetensors",

|

| 45 |

+

"model.layers.11.post_feedforward_layernorm.weight": "model-00003-of-00014.safetensors",

|

| 46 |

+

"model.layers.11.self_attn.k_norm.weight": "model-00003-of-00014.safetensors",

|

| 47 |

+

"model.layers.11.self_attn.k_proj.weight": "model-00003-of-00014.safetensors",

|

| 48 |

+

"model.layers.11.self_attn.o_proj.weight": "model-00003-of-00014.safetensors",

|

| 49 |

+

"model.layers.11.self_attn.q_norm.weight": "model-00003-of-00014.safetensors",

|

| 50 |

+

"model.layers.11.self_attn.q_proj.weight": "model-00003-of-00014.safetensors",

|

| 51 |

+

"model.layers.11.self_attn.v_proj.weight": "model-00003-of-00014.safetensors",

|

| 52 |

+

"model.layers.12.mlp.down_proj.weight": "model-00003-of-00014.safetensors",

|

| 53 |

+

"model.layers.12.mlp.gate_proj.weight": "model-00003-of-00014.safetensors",

|

| 54 |

+

"model.layers.12.mlp.up_proj.weight": "model-00003-of-00014.safetensors",

|

| 55 |

+

"model.layers.12.post_attention_layernorm.weight": "model-00003-of-00014.safetensors",

|

| 56 |

+

"model.layers.12.post_feedforward_layernorm.weight": "model-00003-of-00014.safetensors",

|

| 57 |

+

"model.layers.12.self_attn.k_norm.weight": "model-00003-of-00014.safetensors",

|

| 58 |

+

"model.layers.12.self_attn.k_proj.weight": "model-00003-of-00014.safetensors",

|

| 59 |

+

"model.layers.12.self_attn.o_proj.weight": "model-00003-of-00014.safetensors",

|

| 60 |

+

"model.layers.12.self_attn.q_norm.weight": "model-00003-of-00014.safetensors",

|

| 61 |

+

"model.layers.12.self_attn.q_proj.weight": "model-00003-of-00014.safetensors",

|

| 62 |

+

"model.layers.12.self_attn.v_proj.weight": "model-00003-of-00014.safetensors",

|

| 63 |

+

"model.layers.13.mlp.down_proj.weight": "model-00003-of-00014.safetensors",

|

| 64 |

+

"model.layers.13.mlp.gate_proj.weight": "model-00003-of-00014.safetensors",

|

| 65 |

+

"model.layers.13.mlp.up_proj.weight": "model-00003-of-00014.safetensors",

|

| 66 |

+

"model.layers.13.post_attention_layernorm.weight": "model-00003-of-00014.safetensors",

|

| 67 |

+

"model.layers.13.post_feedforward_layernorm.weight": "model-00003-of-00014.safetensors",

|

| 68 |

+

"model.layers.13.self_attn.k_norm.weight": "model-00003-of-00014.safetensors",

|

| 69 |

+

"model.layers.13.self_attn.k_proj.weight": "model-00003-of-00014.safetensors",

|

| 70 |

+

"model.layers.13.self_attn.o_proj.weight": "model-00003-of-00014.safetensors",

|

| 71 |

+

"model.layers.13.self_attn.q_norm.weight": "model-00003-of-00014.safetensors",

|

| 72 |

+

"model.layers.13.self_attn.q_proj.weight": "model-00003-of-00014.safetensors",

|

| 73 |

+

"model.layers.13.self_attn.v_proj.weight": "model-00003-of-00014.safetensors",

|

| 74 |

+

"model.layers.14.mlp.down_proj.weight": "model-00004-of-00014.safetensors",

|

| 75 |

+

"model.layers.14.mlp.gate_proj.weight": "model-00004-of-00014.safetensors",

|

| 76 |

+

"model.layers.14.mlp.up_proj.weight": "model-00004-of-00014.safetensors",

|

| 77 |

+

"model.layers.14.post_attention_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 78 |

+

"model.layers.14.post_feedforward_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 79 |

+

"model.layers.14.self_attn.k_norm.weight": "model-00003-of-00014.safetensors",

|

| 80 |

+

"model.layers.14.self_attn.k_proj.weight": "model-00003-of-00014.safetensors",

|

| 81 |

+

"model.layers.14.self_attn.o_proj.weight": "model-00003-of-00014.safetensors",

|

| 82 |

+

"model.layers.14.self_attn.q_norm.weight": "model-00003-of-00014.safetensors",

|

| 83 |

+

"model.layers.14.self_attn.q_proj.weight": "model-00003-of-00014.safetensors",

|

| 84 |

+

"model.layers.14.self_attn.v_proj.weight": "model-00003-of-00014.safetensors",

|

| 85 |

+

"model.layers.15.mlp.down_proj.weight": "model-00004-of-00014.safetensors",

|

| 86 |

+

"model.layers.15.mlp.gate_proj.weight": "model-00004-of-00014.safetensors",

|

| 87 |

+

"model.layers.15.mlp.up_proj.weight": "model-00004-of-00014.safetensors",

|

| 88 |

+

"model.layers.15.post_attention_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 89 |

+

"model.layers.15.post_feedforward_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 90 |

+

"model.layers.15.self_attn.k_norm.weight": "model-00004-of-00014.safetensors",

|

| 91 |

+

"model.layers.15.self_attn.k_proj.weight": "model-00004-of-00014.safetensors",

|

| 92 |

+

"model.layers.15.self_attn.o_proj.weight": "model-00004-of-00014.safetensors",

|

| 93 |

+

"model.layers.15.self_attn.q_norm.weight": "model-00004-of-00014.safetensors",

|

| 94 |

+

"model.layers.15.self_attn.q_proj.weight": "model-00004-of-00014.safetensors",

|

| 95 |

+

"model.layers.15.self_attn.v_proj.weight": "model-00004-of-00014.safetensors",

|

| 96 |

+

"model.layers.16.mlp.down_proj.weight": "model-00004-of-00014.safetensors",

|

| 97 |

+

"model.layers.16.mlp.gate_proj.weight": "model-00004-of-00014.safetensors",

|

| 98 |

+

"model.layers.16.mlp.up_proj.weight": "model-00004-of-00014.safetensors",

|

| 99 |

+

"model.layers.16.post_attention_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 100 |

+

"model.layers.16.post_feedforward_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 101 |

+

"model.layers.16.self_attn.k_norm.weight": "model-00004-of-00014.safetensors",

|

| 102 |

+

"model.layers.16.self_attn.k_proj.weight": "model-00004-of-00014.safetensors",

|

| 103 |

+

"model.layers.16.self_attn.o_proj.weight": "model-00004-of-00014.safetensors",

|

| 104 |

+

"model.layers.16.self_attn.q_norm.weight": "model-00004-of-00014.safetensors",

|

| 105 |

+

"model.layers.16.self_attn.q_proj.weight": "model-00004-of-00014.safetensors",

|

| 106 |

+

"model.layers.16.self_attn.v_proj.weight": "model-00004-of-00014.safetensors",

|

| 107 |

+

"model.layers.17.mlp.down_proj.weight": "model-00004-of-00014.safetensors",

|

| 108 |

+

"model.layers.17.mlp.gate_proj.weight": "model-00004-of-00014.safetensors",

|

| 109 |

+

"model.layers.17.mlp.up_proj.weight": "model-00004-of-00014.safetensors",

|

| 110 |

+

"model.layers.17.post_attention_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 111 |

+

"model.layers.17.post_feedforward_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 112 |

+

"model.layers.17.self_attn.k_norm.weight": "model-00004-of-00014.safetensors",

|

| 113 |

+

"model.layers.17.self_attn.k_proj.weight": "model-00004-of-00014.safetensors",

|

| 114 |

+

"model.layers.17.self_attn.o_proj.weight": "model-00004-of-00014.safetensors",

|

| 115 |

+

"model.layers.17.self_attn.q_norm.weight": "model-00004-of-00014.safetensors",

|

| 116 |

+

"model.layers.17.self_attn.q_proj.weight": "model-00004-of-00014.safetensors",

|

| 117 |

+

"model.layers.17.self_attn.v_proj.weight": "model-00004-of-00014.safetensors",

|

| 118 |

+

"model.layers.18.mlp.down_proj.weight": "model-00004-of-00014.safetensors",

|

| 119 |

+

"model.layers.18.mlp.gate_proj.weight": "model-00004-of-00014.safetensors",

|

| 120 |

+

"model.layers.18.mlp.up_proj.weight": "model-00004-of-00014.safetensors",

|

| 121 |

+

"model.layers.18.post_attention_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 122 |

+

"model.layers.18.post_feedforward_layernorm.weight": "model-00004-of-00014.safetensors",

|

| 123 |

+

"model.layers.18.self_attn.k_norm.weight": "model-00004-of-00014.safetensors",

|

| 124 |

+

"model.layers.18.self_attn.k_proj.weight": "model-00004-of-00014.safetensors",

|

| 125 |

+

"model.layers.18.self_attn.o_proj.weight": "model-00004-of-00014.safetensors",

|

| 126 |

+

"model.layers.18.self_attn.q_norm.weight": "model-00004-of-00014.safetensors",

|

| 127 |

+

"model.layers.18.self_attn.q_proj.weight": "model-00004-of-00014.safetensors",

|

| 128 |

+

"model.layers.18.self_attn.v_proj.weight": "model-00004-of-00014.safetensors",

|

| 129 |

+

"model.layers.19.mlp.down_proj.weight": "model-00005-of-00014.safetensors",

|

| 130 |

+

"model.layers.19.mlp.gate_proj.weight": "model-00005-of-00014.safetensors",

|

| 131 |

+

"model.layers.19.mlp.up_proj.weight": "model-00005-of-00014.safetensors",

|

| 132 |

+

"model.layers.19.post_attention_layernorm.weight": "model-00005-of-00014.safetensors",

|

| 133 |

+

"model.layers.19.post_feedforward_layernorm.weight": "model-00005-of-00014.safetensors",

|

| 134 |

+

"model.layers.19.self_attn.k_norm.weight": "model-00004-of-00014.safetensors",

|

| 135 |

+

"model.layers.19.self_attn.k_proj.weight": "model-00004-of-00014.safetensors",

|

| 136 |

+

"model.layers.19.self_attn.o_proj.weight": "model-00004-of-00014.safetensors",

|

| 137 |

+

"model.layers.19.self_attn.q_norm.weight": "model-00004-of-00014.safetensors",

|

| 138 |

+

"model.layers.19.self_attn.q_proj.weight": "model-00004-of-00014.safetensors",

|

| 139 |

+

"model.layers.19.self_attn.v_proj.weight": "model-00004-of-00014.safetensors",

|

| 140 |

+

"model.layers.2.mlp.down_proj.weight": "model-00001-of-00014.safetensors",

|

| 141 |

+

"model.layers.2.mlp.gate_proj.weight": "model-00001-of-00014.safetensors",

|

| 142 |

+

"model.layers.2.mlp.up_proj.weight": "model-00001-of-00014.safetensors",

|

| 143 |

+

"model.layers.2.post_attention_layernorm.weight": "model-00001-of-00014.safetensors",

|

| 144 |

+

"model.layers.2.post_feedforward_layernorm.weight": "model-00001-of-00014.safetensors",

|

| 145 |

+

"model.layers.2.self_attn.k_norm.weight": "model-00001-of-00014.safetensors",

|

| 146 |

+

"model.layers.2.self_attn.k_proj.weight": "model-00001-of-00014.safetensors",

|

| 147 |

+

"model.layers.2.self_attn.o_proj.weight": "model-00001-of-00014.safetensors",

|

| 148 |

+

"model.layers.2.self_attn.q_norm.weight": "model-00001-of-00014.safetensors",

|

| 149 |

+

"model.layers.2.self_attn.q_proj.weight": "model-00001-of-00014.safetensors",

|

| 150 |

+

"model.layers.2.self_attn.v_proj.weight": "model-00001-of-00014.safetensors",

|

| 151 |

+

"model.layers.20.mlp.down_proj.weight": "model-00005-of-00014.safetensors",

|

| 152 |

+

"model.layers.20.mlp.gate_proj.weight": "model-00005-of-00014.safetensors",

|

| 153 |

+

"model.layers.20.mlp.up_proj.weight": "model-00005-of-00014.safetensors",

|

| 154 |

+

"model.layers.20.post_attention_layernorm.weight": "model-00005-of-00014.safetensors",

|

| 155 |

+

"model.layers.20.post_feedforward_layernorm.weight": "model-00005-of-00014.safetensors",

|

| 156 |

+

"model.layers.20.self_attn.k_norm.weight": "model-00005-of-00014.safetensors",

|

| 157 |

+

"model.layers.20.self_attn.k_proj.weight": "model-00005-of-00014.safetensors",

|

| 158 |

+

"model.layers.20.self_attn.o_proj.weight": "model-00005-of-00014.safetensors",

|

| 159 |

+

"model.layers.20.self_attn.q_norm.weight": "model-00005-of-00014.safetensors",

|

| 160 |

+

"model.layers.20.self_attn.q_proj.weight": "model-00005-of-00014.safetensors",

|

| 161 |

+

"model.layers.20.self_attn.v_proj.weight": "model-00005-of-00014.safetensors",

|

| 162 |

+

"model.layers.21.mlp.down_proj.weight": "model-00005-of-00014.safetensors",

|

| 163 |

+

"model.layers.21.mlp.gate_proj.weight": "model-00005-of-00014.safetensors",

|

| 164 |

+

"model.layers.21.mlp.up_proj.weight": "model-00005-of-00014.safetensors",

|

| 165 |

+

"model.layers.21.post_attention_layernorm.weight": "model-00005-of-00014.safetensors",

|

| 166 |

+

"model.layers.21.post_feedforward_layernorm.weight": "model-00005-of-00014.safetensors",

|

| 167 |

+

"model.layers.21.self_attn.k_norm.weight": "model-00005-of-00014.safetensors",

|

| 168 |

+

"model.layers.21.self_attn.k_proj.weight": "model-00005-of-00014.safetensors",

|

| 169 |

+

"model.layers.21.self_attn.o_proj.weight": "model-00005-of-00014.safetensors",

|

| 170 |

+

"model.layers.21.self_attn.q_norm.weight": "model-00005-of-00014.safetensors",

|

| 171 |

+

"model.layers.21.self_attn.q_proj.weight": "model-00005-of-00014.safetensors",

|

| 172 |

+

"model.layers.21.self_attn.v_proj.weight": "model-00005-of-00014.safetensors",

|

| 173 |

+

"model.layers.22.mlp.down_proj.weight": "model-00005-of-00014.safetensors",

|

| 174 |

+

"model.layers.22.mlp.gate_proj.weight": "model-00005-of-00014.safetensors",

|

| 175 |

+

"model.layers.22.mlp.up_proj.weight": "model-00005-of-00014.safetensors",

|

| 176 |

+

"model.layers.22.post_attention_layernorm.weight": "model-00005-of-00014.safetensors",

|

| 177 |

+

"model.layers.22.post_feedforward_layernorm.weight": "model-00005-of-00014.safetensors",

|

| 178 |

+

"model.layers.22.self_attn.k_norm.weight": "model-00005-of-00014.safetensors",

|

| 179 |

+

"model.layers.22.self_attn.k_proj.weight": "model-00005-of-00014.safetensors",

|

| 180 |

+

"model.layers.22.self_attn.o_proj.weight": "model-00005-of-00014.safetensors",

|

| 181 |

+

"model.layers.22.self_attn.q_norm.weight": "model-00005-of-00014.safetensors",

|

| 182 |

+

"model.layers.22.self_attn.q_proj.weight": "model-00005-of-00014.safetensors",

|

| 183 |

+

"model.layers.22.self_attn.v_proj.weight": "model-00005-of-00014.safetensors",

|

| 184 |

+

"model.layers.23.mlp.down_proj.weight": "model-00005-of-00014.safetensors",

|

| 185 |

+

"model.layers.23.mlp.gate_proj.weight": "model-00005-of-00014.safetensors",

|

| 186 |

+

"model.layers.23.mlp.up_proj.weight": "model-00005-of-00014.safetensors",

|

| 187 |

+

"model.layers.23.post_attention_layernorm.weight": "model-00005-of-00014.safetensors",