---
license: mit
---

Cogent csp series: Cogent-csp-1m

The most powerful on-device AI, built with the most advanced technology.

Overview

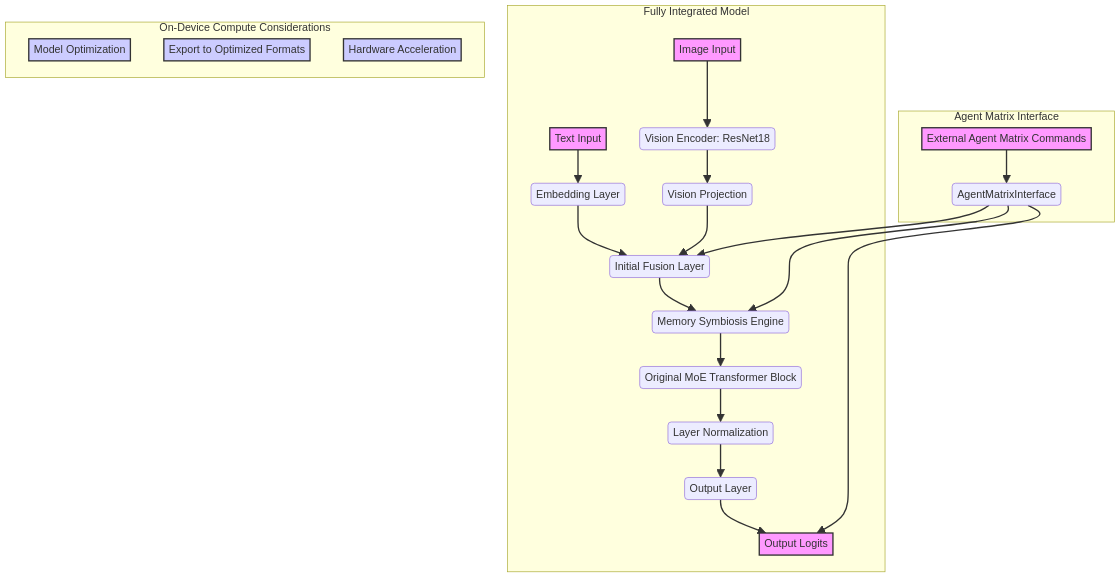

This project aims to develop an advanced multimodal Mixture-of-Experts (MoE) model that not only processes text and images but also deeply integrates three forward-looking strategies: "On-Device Compute" (Edge AI Computing), "Person X Memory Symbiosis Engine" (New Perception), and "Agent Matrix Intelligent Agent Ecosystem Framework" (New Ecosystem). Evolved from a foundational MoE Transformer architecture, this model seeks to explore the potential of next-generation AI systems in perception, memory, computation, and collaboration, providing users with more intelligent, personalized, and efficient services.

Model Features and Innovations

1. Core Architecture: Vision-Enhanced MoE Transformer

The model's core is an MoE Transformer block that efficiently processes and fuses multimodal information. On top of this, we incorporate a pre-trained ResNet18 as the vision encoder, which extracts rich visual features from images so they can be fused with the text embeddings. This design aims to give the model robust understanding of both complex semantics and visual content.

2. On-Device Compute (New Computing Paradigm)

Concept: On-Device Compute refers to executing AI computations directly on end-user devices (such as smartphones, IoT devices) rather than relying on cloud services. This offers significant advantages including low latency, enhanced privacy protection, reduced bandwidth consumption, and offline availability [1, 2].

Integration Strategy:

The design of this model takes into account the requirements of On-Device Compute:

- Lightweight Potential: The model has approximately 13.69M parameters in total, of which about 2.12M are trainable. Keeping the parameter count this small lays a solid foundation for the deployment optimizations below.

- Deployment Optimization: During actual deployment, the model will undergo a series of optimizations to maximize its operational efficiency on resource-constrained devices. These include Quantization (converting model weights and activations to lower precision, such as INT8, to reduce model size and accelerate inference), Pruning (removing redundant connections or neurons to make the model sparse), and Conversion to Optimized Formats (such as ONNX, TensorFlow Lite, Core ML) [1].
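To make the quantization step above concrete, the sketch below shows symmetric per-tensor INT8 quantization in plain Python: each float weight is divided by a single scale, rounded, and clamped to the signed 8-bit range, so storage drops from four bytes per float32 weight to one byte. This is a generic illustration of the technique, not this project's deployment pipeline; the function names are hypothetical. In PyTorch, `torch.ao.quantization.quantize_dynamic` applies a similar idea to `nn.Linear` layers.

```python
def quantize_int8_symmetric(weights):
    """Map floats to int8 codes using one per-tensor scale (zero-point fixed at 0)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # largest magnitude maps to 127
    # round() to the nearest code, then clamp into the symmetric int8 range.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from int8 codes."""
    return [q * scale for q in codes]


weights = [0.42, -1.27, 0.003, 0.9]
codes, scale = quantize_int8_symmetric(weights)
restored = dequantize(codes, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(codes, scale, max_err)
```

Because no value is clamped here, the reconstruction error per weight is bounded by half the scale; small weights such as `0.003` collapse to code `0`, which is the precision traded for the 4x size reduction.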

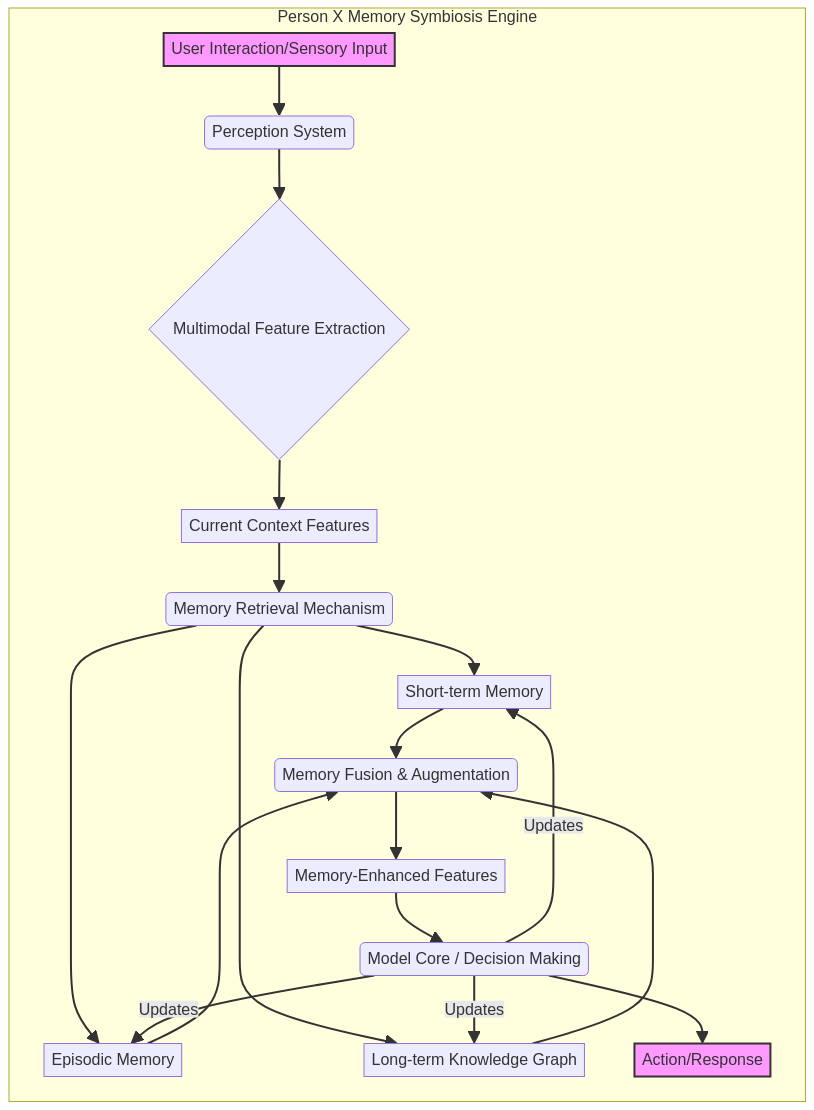

3. Person X Memory Symbiosis Engine (New Perception Paradigm)

Concept: The Person X Memory Symbiosis Engine is a user-centric multimodal memory system designed to build and maintain a lifelong memory repository that co-evolves with the user, achieved through continuous, ubiquitous, and multi-dimensional perception of user behavior [3]. It goes beyond traditional one-off interactions, enabling AI to understand and remember user preferences, habits, and contextual information.

Integration Strategy:

The model incorporates a `MemorySymbiosisEngine` module, which implements memory storage, retrieval, and fusion:

- Memory Module: Contains learnable memory keys (`memory_keys`) and memory values (`memory_values`) that store user historical information or general knowledge.

- Attention-based Retrieval: An attention-based memory reader retrieves the most relevant information from memory, using the current fused (text and visual) features as the query.

- Memory Augmentation: The retrieved memory is fused with the current features to produce "memory-enhanced features," which are then fed into the MoE Transformer block to inform the model's inference and decision-making. This lets the model draw on rich contextual memory during inference.
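The three steps above (learnable key/value slots, attention-based read, fusion into memory-enhanced features) can be sketched as a key-value memory with scaled dot-product attention. This is a hypothetical sketch, not the project's `MemorySymbiosisEngine`: the class name, the query projection, the concatenate-then-project fusion, and the residual connection are assumptions; only the `memory_keys`/`memory_values` names and the `memory_slots=10`, `memory_dim=256` defaults come from this card.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class MemorySymbiosisEngineSketch(nn.Module):
    """Hypothetical key-value memory read and fusion; not the project's code."""

    def __init__(self, feature_dim=256, memory_slots=10, memory_dim=256):
        super().__init__()
        # Learnable memory: one key vector and one value vector per slot.
        self.memory_keys = nn.Parameter(torch.randn(memory_slots, memory_dim) * 0.02)
        self.memory_values = nn.Parameter(torch.randn(memory_slots, memory_dim) * 0.02)
        self.query_proj = nn.Linear(feature_dim, memory_dim)
        # Assumed fusion: concatenate features with retrieved memory, project back.
        self.fuse = nn.Linear(feature_dim + memory_dim, feature_dim)

    def read(self, features):
        """Scaled dot-product attention from fused features over the memory slots."""
        query = self.query_proj(features)                          # (B, T, D)
        scale = self.memory_keys.size(-1) ** 0.5
        scores = query @ self.memory_keys.t() / scale              # (B, T, S)
        weights = F.softmax(scores, dim=-1)                        # sums to 1 over slots
        return weights @ self.memory_values, weights               # (B, T, D), (B, T, S)

    def forward(self, features):
        retrieved, _ = self.read(features)
        # Residual connection keeps the original features in the enhanced output.
        return features + self.fuse(torch.cat([features, retrieved], dim=-1))


engine = MemorySymbiosisEngineSketch()
enhanced = engine(torch.randn(1, 4, 256))  # memory-enhanced features, same shape
print(enhanced.shape)  # torch.Size([1, 4, 256])
```

Soft attention keeps retrieval differentiable, so the memory slots can be trained end to end with the rest of the model.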

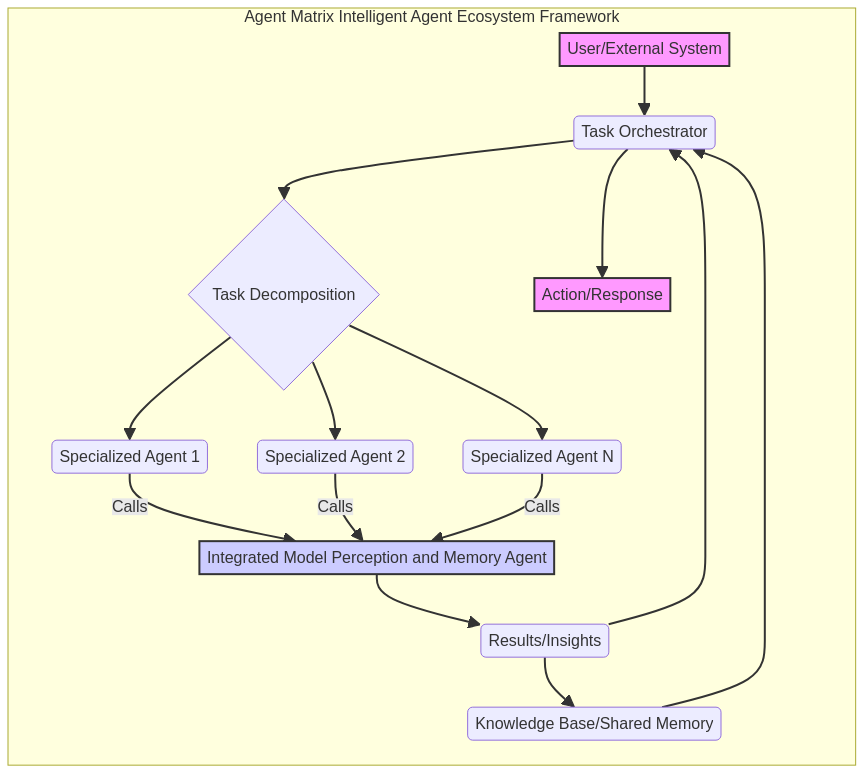

4. Agent Matrix Intelligent Agent Ecosystem Framework (New Ecosystem Paradigm)

Concept: The Agent Matrix Intelligent Agent Ecosystem Framework is a collaborative system for developing, deploying, and managing multiple AI agents. It allows different AI agents to interact, share information, and collectively accomplish complex tasks, thereby forming a powerful and flexible intelligent agent ecosystem [4].

Integration Strategy:

This model is designed to be a core agent within the Agent Matrix framework:

- AgentMatrixInterface: The model is wrapped in the `AgentMatrixInterface` class, which receives commands from the Agent Matrix framework (e.g., `analyze_image_text`, `retrieve_memory`, `generate_response`) and returns the corresponding results.

- Perception and Understanding Agent: Within the Agent Matrix ecosystem, this model acts as a "Perception and Understanding Agent." It is responsible for processing multimodal inputs, applying its visual, linguistic, and memory capabilities to interpret them and reason over them.

- Collaboration Potential: Through standardized interfaces, this model can collaborate with other specialized agents within the Agent Matrix framework (e.g., planning agents, execution agents) to solve larger, more complex user tasks.
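One way to picture the command interface described above is a small dispatch table that maps each framework command name to a handler. The sketch below is hypothetical: `AgentMatrixInterfaceSketch` is not the project's `AgentMatrixInterface`, and the stub lambdas stand in for the model's fusion, memory, and output paths. Only the three command names and their keyword arguments are taken from the usage example in this card.

```python
from typing import Any, Callable, Dict, List


class AgentMatrixInterfaceSketch:
    """Hypothetical command router; the real AgentMatrixInterface may differ."""

    def __init__(self, handlers: Dict[str, Callable[..., Any]]) -> None:
        # Map each framework command name to the function that serves it.
        self._handlers = dict(handlers)

    @property
    def commands(self) -> List[str]:
        """Command names other agents can discover and call."""
        return sorted(self._handlers)

    def __call__(self, command: str, **kwargs: Any) -> Any:
        handler = self._handlers.get(command)
        if handler is None:
            raise ValueError(
                f"Unsupported command {command!r}; expected one of {self.commands}"
            )
        return handler(**kwargs)


# Stub handlers standing in for the model's fusion, memory, and output paths.
interface = AgentMatrixInterfaceSketch({
    "analyze_image_text": lambda text_input, image_input: f"features({text_input}, {image_input})",
    "retrieve_memory": lambda query_text_input, query_image_input: f"memory({query_text_input})",
    "generate_response": lambda text_input, image_input: f"logits({text_input})",
})
print(interface("generate_response", text_input="tokens", image_input="pixels"))
# prints "logits(tokens)"
```

An explicit table keeps the supported command set in one place and turns an unknown command into a clear error rather than a silent fallthrough.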

Model Architecture Diagram

The diagram below illustrates the overall architecture of the Fully Integrated Multimodal MoE Model and how its various modules work together:

```mermaid
graph TD
    subgraph "Fully Integrated Model"
        A[Text Input] --> B(Embedding Layer)
        C[Image Input] --> D(Vision Encoder: ResNet18)
        D --> E(Vision Projection)
        B & E --> F(Initial Fusion Layer)
        F --> G(Memory Symbiosis Engine)
        G --> H(Original MoE Transformer Block)
        H --> I(Layer Normalization)
        I --> J(Output Layer)
        J --> K[Output Logits]
    end

    subgraph "Agent Matrix Interface"
        L[External Agent Matrix Commands] --> M(AgentMatrixInterface)
        M --> F
        M --> K
        M --> G
    end

    subgraph "On-Device Compute Considerations"
        N[Model Optimization]
        O[Export to Optimized Formats]
        P[Hardware Acceleration]
    end

    style A fill:#f9f,stroke:#333,stroke-width:2px
    style C fill:#f9f,stroke:#333,stroke-width:2px
    style K fill:#f9f,stroke:#333,stroke-width:2px
    style L fill:#f9f,stroke:#333,stroke-width:2px
    style N fill:#ccf,stroke:#333,stroke-width:2px
    style O fill:#ccf,stroke:#333,stroke-width:2px
    style P fill:#ccf,stroke:#333,stroke-width:2px
```

Concept Diagrams

Person X Memory Symbiosis Engine Concept

The following diagram illustrates the core workflow of the Person X Memory Symbiosis Engine, showing how it perceives, remembers, and utilizes information from user interactions:

```mermaid
graph TD
    subgraph "Person X Memory Symbiosis Engine"
        A[User Interaction/Sensory Input] --> B(Perception System)
        B --> C{Multimodal Feature Extraction}
        C --> D[Current Context Features]
        D --> E(Memory Retrieval Mechanism)
        E --> F[Short-term Memory]
        E --> G[Episodic Memory]
        E --> H[Long-term Knowledge Graph]
        F & G & H --> I(Memory Fusion & Augmentation)
        I --> J[Memory-Enhanced Features]
        J --> K(Model Core / Decision Making)
        K --> L[Action/Response]
        K -- Updates --> F
        K -- Updates --> G
        K -- Updates --> H
    end

    style A fill:#f9f,stroke:#333,stroke-width:2px
    style L fill:#f9f,stroke:#333,stroke-width:2px
```

Agent Matrix Intelligent Agent Ecosystem Framework Concept

The diagram below demonstrates how the Agent Matrix Intelligent Agent Ecosystem Framework orchestrates multiple agents, including our Fully Integrated Model, to accomplish complex tasks:

```mermaid
graph TD
    subgraph "Agent Matrix Intelligent Agent Ecosystem Framework"
        A[User/External System] --> B(Task Orchestrator)
        B --> C{Task Decomposition}
        C --> D(Specialized Agent 1)
        C --> E(Specialized Agent 2)
        C --> F(Specialized Agent N)
        D -- Calls --> G[Integrated Model Perception and Memory Agent]
        E -- Calls --> G
        F -- Calls --> G
        G --> H(Results/Insights)
        H --> I(Knowledge Base/Shared Memory)
        I --> B
        H --> B
        B --> J[Action/Response]
    end

    style A fill:#f9f,stroke:#333,stroke-width:2px
    style J fill:#f9f,stroke:#333,stroke-width:2px
    style G fill:#ccf,stroke:#333,stroke-width:2px
```
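The orchestration flow in the diagram (decompose a task, dispatch subtasks to specialized agents that call the shared perception agent, record results in shared memory, then respond) can be sketched in a few lines of Python. Everything here is hypothetical and not part of the Agent Matrix framework: `TaskOrchestratorSketch`, the `decompose` callback, the agent names, and `perception_agent` (a stand-in for the integrated model, node G) are illustrative assumptions.

```python
from typing import Callable, Dict, List, Tuple

Subtask = Tuple[str, str]  # (agent name, subtask payload)


class TaskOrchestratorSketch:
    """Hypothetical orchestrator: decompose, dispatch, record, aggregate."""

    def __init__(self, agents: Dict[str, Callable[[str], str]],
                 decompose: Callable[[str, List[str]], List[Subtask]]) -> None:
        self.agents = agents
        self.decompose = decompose
        self.shared_memory: List[str] = []  # stands in for the shared knowledge base

    def run(self, task: str) -> str:
        results = []
        # Decomposition may consult shared memory (the I --> B edge in the diagram).
        for agent_name, payload in self.decompose(task, self.shared_memory):
            result = self.agents[agent_name](payload)
            self.shared_memory.append(result)  # H --> I: results become shared memory
            results.append(result)
        return " | ".join(results)  # B --> J: aggregated action/response


def perception_agent(payload: str) -> str:
    """Stand-in for the integrated perception and memory model (node G)."""
    return f"perceived:{payload}"


orchestrator = TaskOrchestratorSketch(
    agents={
        "planner": lambda p: f"plan[{perception_agent(p)}]",
        "executor": lambda p: f"exec[{perception_agent(p)}]",
    },
    decompose=lambda task, memory: [("planner", task), ("executor", task)],
)
print(orchestrator.run("photo.jpg"))
# prints "plan[perceived:photo.jpg] | exec[perceived:photo.jpg]"
```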

How to Use

You can load and use the new model through the `FullyIntegratedModel` class in the `integrated_model_design.py` script. Its `forward` method accepts `text_input` (token IDs) and `image_input` (image tensors). Through the `AgentMatrixInterface`, you can simulate various commands to test the model's perception, memory, and response capabilities.

Example Code Snippet (from integrated_model_design.py):

```python
import os

import safetensors
import torch
import torch.nn as nn
from PIL import Image
from safetensors.torch import save_file as safetensors_save_file
from torchvision import models, transforms

# ... (Model definition, please refer to the full content of integrated_model_design.py) ...

if __name__ == "__main__":
    # Path to the original MoE weights; adjust for your environment.
    original_model_path = "/home/ubuntu/upload/moe_model.safetensors"

    # Hyperparameters used to build the integrated model
    vocab_size = 10000  # Example vocabulary size
    embedding_dim = 64  # Embedding dimension
    moe_hidden_dim = 192
    num_experts = 16
    visual_feature_dim = 256
    memory_slots = 10
    memory_dim = 256

    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

    integrated_model = FullyIntegratedModel(
        original_model_path=original_model_path,
        vocab_size=vocab_size,
        embedding_dim=embedding_dim,
        moe_hidden_dim=moe_hidden_dim,
        num_experts=num_experts,
        visual_feature_dim=visual_feature_dim,
        memory_slots=memory_slots,
        memory_dim=memory_dim,
    ).to(device)
    integrated_model.eval()  # inference mode

    # Wrap the model so it can be driven by Agent Matrix commands
    agent_interface = AgentMatrixInterface(integrated_model)

    # Dummy inputs: one sequence of one token ID, and one 3x224x224 image
    dummy_text_input = torch.tensor([[100]], dtype=torch.long).to(device)
    dummy_image_input = torch.randn(1, 3, 224, 224).to(device)

    # Simulate 'analyze_image_text' command
    fused_features = agent_interface(command="analyze_image_text", text_input=dummy_text_input, image_input=dummy_image_input)
    print(f"Analyzed features shape: {fused_features.shape}")

    # Simulate 'generate_response' command
    output_logits = agent_interface(command="generate_response", text_input=dummy_text_input, image_input=dummy_image_input)
    print(f"Generated response logits shape: {output_logits.shape}")

    # Simulate 'retrieve_memory' command (note the query_* keyword arguments)
    retrieved_memory = agent_interface(command="retrieve_memory", query_text_input=dummy_text_input, query_image_input=dummy_image_input)
    print(f"Retrieved memory shape: {retrieved_memory.shape}")

    # Save the integrated model
    # ... (Saving code, please refer to the full content of integrated_model_design.py) ...
    print("Fully integrated model saved to fully_integrated_model.safetensors")
```

File List

- `integrated_model_design.py`: Python script implementing the integration logic described above.
- `fully_integrated_model.safetensors`: The SAFETENSORS file containing the weights of the integrated model.
- `model_architecture.png`: Diagram illustrating the overall model architecture.
- `memory_engine_concept.png`: Concept diagram for the Person X Memory Symbiosis Engine.
- `agent_matrix_concept.png`: Concept diagram for the Agent Matrix Intelligent Agent Ecosystem Framework.
- `on_device_compute_research.md`: Research report on On-Device Compute.
- `person_x_memory_symbiosis_engine_research.md`: Research report on the Person X Memory Symbiosis Engine.
- `agent_matrix_research.md`: Research report on the Agent Matrix Intelligent Agent Ecosystem Framework.

References

[1] N-iX. (2024). On-device AI: Benefits, applications, use cases. https://www.n-ix.com/on-device-ai/

[2] Red Hat. (2023). What is Edge AI? https://www.redhat.com/zh-cn/topics/edge-computing/what-is-edge-ai

[3] Sina Finance. (2025). OPPO AI Unveils New Strategy, Building a Symbiotic Intelligent System with Users. https://finance.sina.com.cn/roll/2025-10-17/doc-infufkhk6997878.shtml

[4] CSDN. (2024). Agent Development Framework Selection Guide. https://blog.csdn.net/Baihai_IDP/article/details/143587116